Copilot Cowork matters because it turns a fuzzy AI promise into something easier to picture at work. Microsoft’s March 30 and March 31, 2026 updates describe a setup that plans multistep work, acts across files and conversations, and pairs that process with Researcher features that compare and refine outputs across models.

The useful shift is not simply that “AI teammates are here.” It is that Microsoft is trying to turn AI help into a repeatable workflow with planning, research, review, and human approval points. That is a much better fit for real work than another chatbot that gives a clever answer and leaves the rest to the user.

- Microsoft says Copilot Cowork can plan steps and take actions across files and conversations for long-running, multistep work.

- Researcher now adds two multi-model capabilities, Critique and Council, to improve accuracy, depth, and confidence.

- This is not full automation. The strongest use case is assisted execution with human approval, not unattended autonomy.

Table of Contents

- Workflow Overview

- Architecture and Components

- Step-by-Step Flow

- Failure Modes

- Human-in-the-Loop Points

- Why Copilot Cowork Matters

- FAQ

- Related Reading

- Source

Workflow Overview

The cleanest way to understand Copilot Cowork is to stop imagining “one smarter chat response” and start imagining a small operating loop.

- A user describes the outcome they want.

- Copilot Cowork plans the steps.

- It works across files and conversations to gather what it needs.

- Researcher can expand the evidence-gathering phase.

- Critique or Council can review, compare, or refine the work before a user accepts it.

That sequence matters because it turns AI from a reply engine into a workflow engine. The user is still in charge, but the tool is doing more of the coordination work that previously sat between tabs, documents, messages, and manual checklists.
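The loop described above can be sketched in a few lines of Python. This is only an illustration of the sequence (plan, gather, draft, review, approve), not Microsoft’s implementation or API. Every name here (`WorkItem`, `run_loop`, and the callables passed in) is a hypothetical stand-in.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class WorkItem:
    """Hypothetical record of one pass through the loop."""
    outcome: str                                   # what finished work should look like
    plan: List[str] = field(default_factory=list)
    context: List[str] = field(default_factory=list)
    draft: str = ""
    approved: bool = False


def run_loop(outcome: str,
             plan_steps: Callable[[str], List[str]],
             gather: Callable[[str], List[str]],
             write_draft: Callable[[WorkItem], str],
             review: Callable[[str], str],
             approve: Callable[[str], bool]) -> WorkItem:
    item = WorkItem(outcome=outcome)
    item.plan = plan_steps(outcome)            # 1. plan the steps
    for step in item.plan:                     # 2. gather context per step
        item.context.extend(gather(step))
    item.draft = write_draft(item)             # 3. produce a draft
    item.draft = review(item.draft)            # 4. review stage (Critique or Council stand-in)
    item.approved = approve(item.draft)        # 5. a person accepts or rejects
    return item
```

The point of the sketch is the ordering: review happens before approval, and approval is a separate callable that a person controls rather than a flag the system sets for itself.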

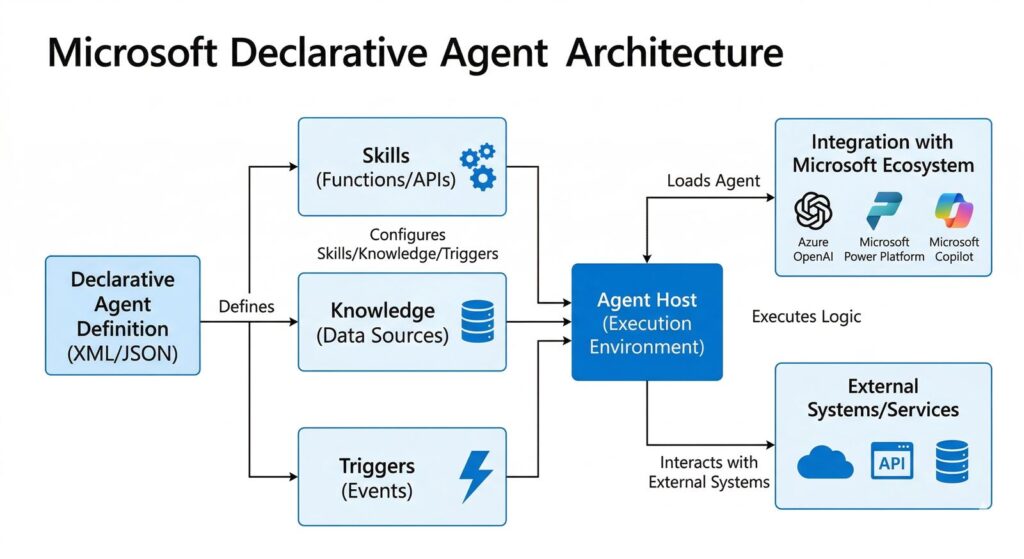

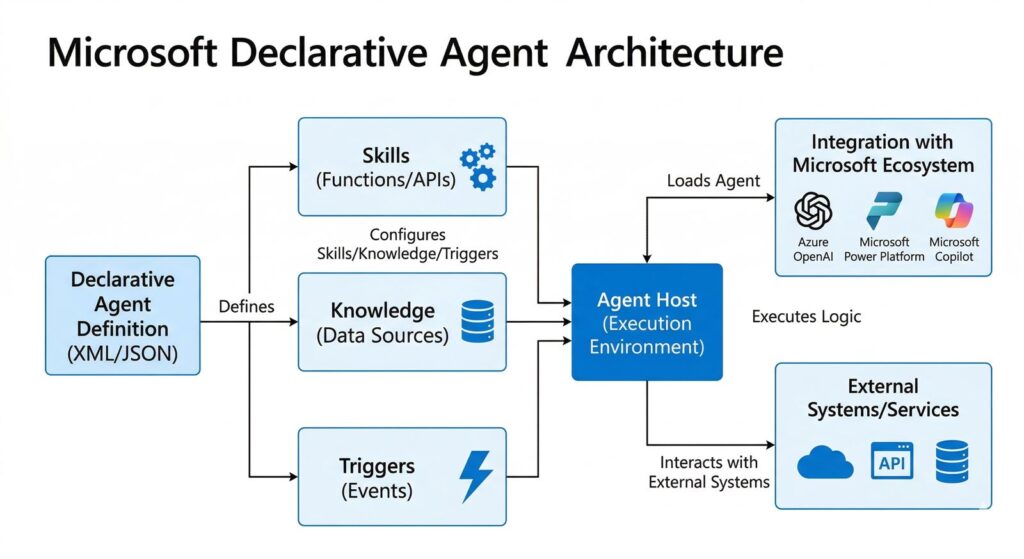

Architecture and Components

Microsoft’s official materials give us a practical component map.

Copilot Cowork

On Microsoft’s Frontier features page, Copilot Cowork is described as a way to “go from to dos to done.” The key behavior is that a user describes an outcome, and Cowork can help plan steps and take actions across files and conversations for long-running, multistep work. That is the orchestration layer.

Researcher

Researcher is the deeper analysis layer. Microsoft says it is designed for complex research in the flow of work, which makes it the best fit for questions that need document review, synthesis, and evidence gathering rather than a short answer.

Critique

According to Microsoft’s March 30, 2026 post, Critique separates generation from evaluation. One model drives the initial research and drafting process, while another model reviews and refines before a final report is produced. That matters because a common risk in agent workflows is that the same system generates and then rubber-stamps its own work.
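A minimal sketch of that separation, assuming the generator and the reviewer are two independent callables. The function and type names are hypothetical and do not correspond to any Microsoft API. The sketch shows only the shape of a generate-then-evaluate flow.

```python
from typing import Callable, List, NamedTuple


class CritiqueResult(NamedTuple):
    draft: str          # what the first model produced
    notes: List[str]    # what the reviewing model flagged
    final: str          # the draft after refinement


def critique_flow(task: str,
                  generate: Callable[[str], str],
                  evaluate: Callable[[str], List[str]],
                  refine: Callable[[str, List[str]], str]) -> CritiqueResult:
    draft = generate(task)            # model A drafts
    notes = evaluate(draft)           # model B reviews; it never sees its own output
    # Refine only when the reviewer raised something; otherwise keep the draft.
    final = refine(draft, notes) if notes else draft
    return CritiqueResult(draft, notes, final)
```

Keeping `draft`, `notes`, and `final` together in the result means a human reviewer can see what changed between the first draft and the final report.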

Council

Council is the comparison layer. Microsoft says it can run an Anthropic model and an OpenAI model side by side, then use a judge model to distill key findings, highlight agreement and disagreement, and call out what one report surfaced that the other missed. That is much closer to an editorial or analyst workflow than a normal chatbot interaction.
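To make the comparison concrete, here is a rough sketch of what a judge step could output. It assumes each report has already been reduced to a mapping from topic to claim, which is a simplification introduced here. A real judge model would compare meaning, not exact strings, and `compare_reports` is a hypothetical name.

```python
from typing import Dict, List


def compare_reports(report_a: Dict[str, str],
                    report_b: Dict[str, str]) -> Dict[str, List[str]]:
    """Sort topics into agreement, disagreement, and findings unique to one report."""
    shared = report_a.keys() & report_b.keys()
    return {
        # Both reports cover the topic and state the same claim.
        "agreement": sorted(t for t in shared if report_a[t] == report_b[t]),
        # Both cover the topic but state different claims.
        "disagreement": sorted(t for t in shared if report_a[t] != report_b[t]),
        # One report surfaced the topic and the other missed it.
        "only_in_a": sorted(report_a.keys() - report_b.keys()),
        "only_in_b": sorted(report_b.keys() - report_a.keys()),
    }
```

The four buckets map onto the behaviors Microsoft describes: agreement, disagreement, and what one report surfaced that the other missed.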

Step-by-Step Flow

1. Define the outcome, not just the question

The workflow starts with a better input. Instead of asking a vague question, the user tells Cowork what finished work should look like. That could be a briefing, a recommendation memo, a project summary, or a next-step plan.

2. Let Cowork break the task into stages

This is where the system starts to feel like a teammate. Planning the steps sounds small, but it is often the hardest part of multistep work. The value is not only that the AI can act. It is that it can organize the work before acting.

3. Pull context from files and conversations

Cowork’s official positioning is heavily grounded in Microsoft 365 context. That means the workflow is strongest when the useful information is already inside your work environment rather than scattered across public web results alone.

4. Use Researcher when the task needs deeper evidence

Not every task needs multi-model analysis. But when the job involves synthesis, citation integrity, or a decision that needs stronger evidence, Researcher becomes the right stage of the workflow.

5. Add Critique or Council before trust-heavy outputs

This is the real operating insight. Critique is better when you want a stronger final report from a coordinated generation-plus-review flow. Council is better when you want explicit comparison across models so you can see where they converge or diverge.

6. Keep the human at the approval boundary

The final action should still belong to a person when the output affects external communication, decision-making, policy, customer messaging, or high-trust internal reporting. That is where the workflow becomes useful without drifting into overclaiming.

Failure Modes

Ambiguous task framing

If the user describes the job poorly, the workflow gets more efficient at doing the wrong thing. Better orchestration does not rescue a bad objective.

Context overload

Access to files and conversations sounds powerful, but it also creates a relevance problem. If the working context is noisy, outdated, or poorly organized, the system may gather a lot of information without improving the final answer.

Model disagreement without decision rules

Council is valuable because it surfaces differences. But differences are only useful if someone knows how to resolve them. A comparison layer without a decision policy can leave teams with more analysis and less clarity.
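One way to picture a decision policy is as a small, explicit rule set agreed on before the comparison runs. The sketch below is an assumption for illustration only: the `Claim` type, the `resolve` function, the source-count heuristic, and the `min_source_gap` threshold are all invented here and are not part of any Microsoft feature.

```python
from dataclasses import dataclass
from typing import Set


@dataclass(frozen=True)
class Claim:
    text: str
    sources: int    # number of cited sources backing the claim


def resolve(topic: str, a: Claim, b: Claim,
            high_stakes: Set[str], min_source_gap: int = 2) -> str:
    """Return 'accept', 'prefer_a', 'prefer_b', or 'escalate' for one topic."""
    if a.text == b.text:
        return "accept"                 # no disagreement to resolve
    if topic in high_stakes:
        return "escalate"               # sensitive topics always go to a person
    if abs(a.sources - b.sources) >= min_source_gap:
        # Prefer the clearly better-supported claim.
        return "prefer_a" if a.sources > b.sources else "prefer_b"
    return "escalate"                   # too close to call automatically
```

The specific rules matter less than the fact that they exist. Any disagreement that no rule covers falls through to a person rather than being settled silently.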

Overconfidence in multistep execution

The biggest operational risk is assuming that “can plan steps and take actions” means “can be trusted unattended.” That is not what Microsoft’s materials promise. The safer interpretation is assisted workflow execution under supervision.

Availability and rollout constraints

Microsoft places these features inside the Frontier program. That means access, configuration, and admin readiness still matter. A workflow that sounds universal in a demo may still be gated in practice.

Human-in-the-Loop Points

Human review should stay in five places.

- At the objective stage, to define the right outcome and constraints.

- At the evidence stage, to confirm the right files and conversations are being used.

- At the disagreement stage, when Council shows meaningful differences in framing or interpretation.

- At the action stage, before messages, reports, or decisions are finalized.

- At the exception stage, when the workflow touches sensitive, incomplete, or contradictory information.

This is the practical reason the article belongs in Agents rather than News. The real value is not the announcement itself. It is the operating pattern the announcement reveals.
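The five review points listed above can be modeled as an explicit gate: nothing is finalized until every required checkpoint has a named reviewer. This is a hypothetical sketch. `Checkpoint`, `ReviewLog`, `ApprovalRequired`, and `finalize` are illustrative names, not product features.

```python
from enum import Enum
from typing import Dict, Set


class Checkpoint(Enum):
    OBJECTIVE = "objective"
    EVIDENCE = "evidence"
    DISAGREEMENT = "disagreement"
    ACTION = "action"
    EXCEPTION = "exception"


class ApprovalRequired(Exception):
    """Raised when a required human sign-off is missing."""


class ReviewLog:
    def __init__(self) -> None:
        self._signed: Dict[Checkpoint, str] = {}

    def sign_off(self, checkpoint: Checkpoint, reviewer: str) -> None:
        self._signed[checkpoint] = reviewer

    def missing(self, required: Set[Checkpoint]) -> Set[Checkpoint]:
        return required - set(self._signed)


def finalize(log: ReviewLog, required: Set[Checkpoint]) -> bool:
    gaps = log.missing(required)
    if gaps:
        names = ", ".join(sorted(c.value for c in gaps))
        raise ApprovalRequired(f"missing sign-off: {names}")
    return True
```

Which checkpoints are required can vary by task. A routine internal summary might need only the objective and action sign-offs, while a customer-facing report might need all five.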

Why Copilot Cowork Matters

Most AI product demos still focus on generation. Copilot Cowork matters because it focuses on work completion. That sounds like a small wording change, but it is the difference between “help me write something” and “help me get this done.”

It also matters because Microsoft is making model diversity part of the workflow. Critique and Council are not only capability boosts. They are a signal that multi-model systems are becoming normal in mainstream work software.

For readers, the takeaway is simple. AI teammates are becoming real not when they sound human, but when they can plan, gather context, compare outputs, and still leave the right decisions to a person. That is what this launch makes easier to picture.

FAQ

What is Copilot Cowork in plain English?

It is Microsoft’s way of turning Copilot into a multistep work assistant. Instead of just answering a prompt, Cowork can help plan and carry out work across files and conversations while the user stays in control.

What is the difference between Critique and Council?

Critique separates generation from review to improve one final output. Council runs multiple models side by side, then uses a judge model to highlight agreement, disagreement, and unique findings.

Does this mean fully autonomous AI coworkers are here?

No. The official materials support an assisted-workflow interpretation, not a “no human needed” claim. The safest and most practical setup is human-supervised execution.

Key Takeaways

- Copilot Cowork turns AI from a reply system into a workflow system.

- Researcher’s Critique and Council features make multi-model review explicit.

- Human review still matters at five points: objective, evidence, disagreement, action, and exception.

- The strongest reading is practical orchestration, not full automation hype.

Related Reading

- GPT-5.4 for ChatGPT

- Deep Research vs Search vs ChatGPT

- Deep Research Prompt Template
