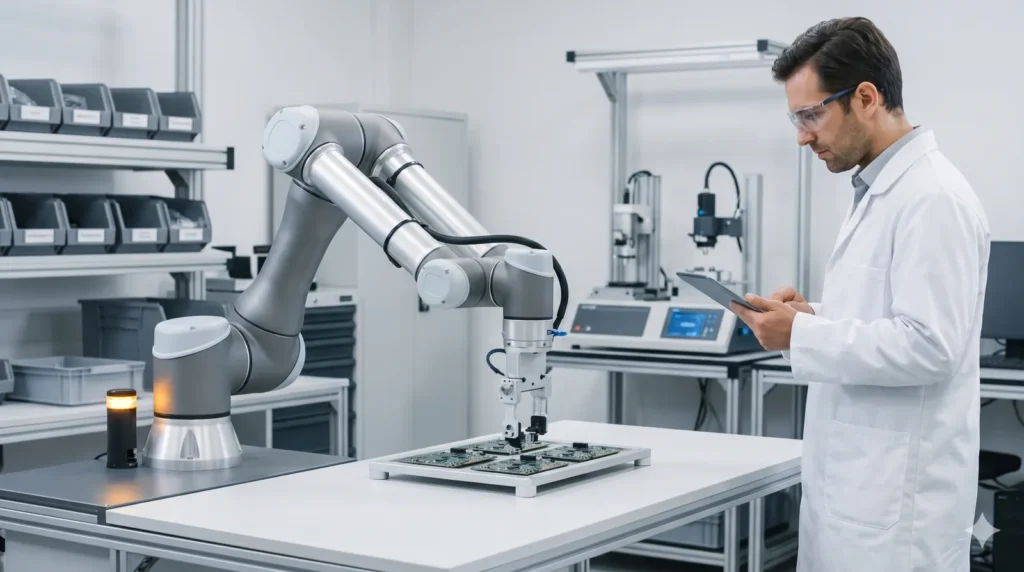

Practical robot AI is still early, but it is starting to look less like a science-fiction promise and more like an operating workflow. That shift is not coming from a single humanoid demo. It is coming from training-data systems, simulation workflows, and platform announcements that make real-world robotics development more repeatable.

NVIDIA’s official newsroom gives a good example of that pattern. On March 16, 2026, the company announced its open Physical AI Data Factory Blueprint, and it framed the release around robotics, vision AI agents, and autonomous vehicle development. That does not prove mass deployment. It does suggest why practical robot AI is starting to feel more grounded.

- Practical robot AI is becoming more believable because the training and simulation pipeline is getting clearer.

- The important story is infrastructure, evaluation, and partner adoption, not consumer robot hype.

- Blueprints and synthetic-data systems do not guarantee safe real-world behavior, but they do make development workflows more concrete.

- The clearest signal is that robotics now has a more visible data-to-simulation-to-deployment path.

Table of Contents

- Workflow Overview

- Architecture and Components

- 5 Signs Practical Robot AI Is Getting Real

- Failure Modes

- Human-in-the-Loop Points

- FAQ

- Related Reading

- Source

Workflow Overview

A realistic practical robot AI workflow starts long before a robot touches the real world. It begins with data generation, proceeds through model training, simulation, and evaluation, and only then moves toward physical deployment. That is why recent platform announcements matter more than isolated robot clips.

When a company shows a repeatable pipeline for generating and evaluating training data at scale, it makes robotics progress easier to reason about. It turns the story from “Look at this robot” into “Here is the system that makes robot improvement more scalable.”
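The stage ordering described above can be sketched as a small state tracker. This is an illustrative sketch only, not part of any NVIDIA tooling: the stage names come from the workflow in this section, while `PipelineRun`, `next_stage`, and `complete` are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Stage order taken from the workflow described in this section.
STAGES = (
    "data_generation",
    "model_training",
    "simulation",
    "evaluation",
    "physical_deployment",
)


@dataclass
class PipelineRun:
    """Hypothetical tracker that only lets stages complete in order."""

    completed: List[str] = field(default_factory=list)

    def next_stage(self) -> Optional[str]:
        # Return the next stage that is allowed to run, or None when done.
        if len(self.completed) < len(STAGES):
            return STAGES[len(self.completed)]
        return None

    def complete(self, stage: str) -> None:
        # Refuse to skip ahead, e.g. deploying before evaluation has run.
        expected = self.next_stage()
        if stage != expected:
            raise ValueError(
                f"cannot run {stage!r}; next allowed stage is {expected!r}"
            )
        self.completed.append(stage)


run = PipelineRun()
run.complete("data_generation")
run.complete("model_training")
print(run.next_stage())  # simulation
```

The point of the sketch is the ordering constraint: calling `run.complete("physical_deployment")` at this stage raises a `ValueError`, which mirrors the idea that deployment comes only after simulation and evaluation.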

Architecture and Components

1. Synthetic or generated data

NVIDIA says its Physical AI Data Factory Blueprint is designed to generate, augment, and evaluate training data at scale. That is one of the strongest signs of practical robot AI, because physical systems need a steady supply of usable training data, not just a catchy demo.

2. Foundation models and simulation

The blueprint uses Cosmos open world foundation models and coding agents as part of the workflow. That matters because training and simulation increasingly look like a connected stack rather than isolated tools.

3. Evaluation before deployment

A robot model that cannot be evaluated systematically is hard to trust. Infrastructure that supports evaluation is a stronger practical signal than a flashy showcase.
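One way to picture systematic evaluation is a gate that checks simulated results against explicit thresholds before a model moves forward. The sketch below assumes a hypothetical `EvalReport` summary and made-up threshold values; none of these names or numbers come from NVIDIA's blueprint.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class EvalReport:
    """Hypothetical summary of a simulated evaluation run."""

    episodes: int
    success_rate: float    # fraction of episodes where the task succeeded
    collision_rate: float  # fraction of episodes with any collision


def gate_failures(
    report: EvalReport,
    min_episodes: int = 1000,          # illustrative threshold
    min_success_rate: float = 0.95,    # illustrative threshold
    max_collision_rate: float = 0.01,  # illustrative threshold
) -> List[str]:
    """Return the reasons a model should not move forward; empty means pass."""
    failures = []
    if report.episodes < min_episodes:
        failures.append("too few evaluation episodes")
    if report.success_rate < min_success_rate:
        failures.append("success rate below threshold")
    if report.collision_rate > max_collision_rate:
        failures.append("collision rate above threshold")
    return failures


report = EvalReport(episodes=2000, success_rate=0.97, collision_rate=0.03)
print(gate_failures(report))  # ['collision rate above threshold']
```

Returning a list of reasons rather than a single boolean keeps the decision auditable. Passing a gate like this in simulation is a necessary check, not evidence that real-world behavior is safe.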

5 Signs Practical Robot AI Is Getting Real

1. Training-data workflows are becoming explicit

Practical robot AI needs data pipelines. NVIDIA’s blueprint matters because it describes a repeatable architecture instead of only showing the end result.

2. Robotics is being tied to broader AI platforms

The more robotics connects to common model, simulation, and developer infrastructure, the easier it becomes for teams to iterate.

3. Partner activity is becoming part of the story

NVIDIA also points to robotics companies building on its physical AI stack. That ecosystem signal is imperfect, but it usually means the work is moving beyond one lab demo.

4. The conversation is shifting from spectacle to workflow

The strongest practical robot AI signal is when the discussion turns to data, evaluation, and deployment systems instead of humanoid wow moments.

5. Simulation is becoming a first-class part of the process

Real-world robotics is expensive and risky. Better simulation and synthetic-data workflows reduce that friction and make experimentation cheaper and safer to repeat.

Failure Modes

- Treating infrastructure announcements as proof that safe real-world deployment is solved.

- Confusing synthetic-data scale with guaranteed robot reliability.

- Reading a developer platform story as if it were a consumer robot launch.

Human-in-the-Loop Points

Human oversight still matters at dataset design, evaluation criteria, safety review, and deployment decisions. That is true even when the platform stack looks stronger.
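The four oversight points above can be treated as explicit sign-offs that must all be present before a rollout proceeds. This is a minimal sketch under that assumption; `CHECKPOINTS`, `missing_signoffs`, and the reviewer labels are hypothetical names used only for illustration.

```python
from typing import Dict, List

# The four oversight points named in this section.
CHECKPOINTS = (
    "dataset_design",
    "evaluation_criteria",
    "safety_review",
    "deployment_decision",
)


def missing_signoffs(signoffs: Dict[str, str]) -> List[str]:
    """Return checkpoints that have no named human reviewer yet."""
    return [c for c in CHECKPOINTS if not signoffs.get(c, "").strip()]


signoffs = {"dataset_design": "reviewer_a", "evaluation_criteria": "reviewer_b"}
print(missing_signoffs(signoffs))  # ['safety_review', 'deployment_decision']
```

An empty result means every checkpoint has a named person attached. It does not replace the review itself.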

The right takeaway is not that robots can run alone now. It is that the workflow behind practical robot AI is becoming easier to see and easier to improve.

FAQ

What does practical robot AI mean here?

It means robotics progress that is tied to repeatable workflows such as data generation, simulation, and evaluation rather than pure hype.

Did NVIDIA announce a consumer robot product?

No. The recent announcements are better understood as infrastructure and partner ecosystem updates.

Why does a data factory blueprint matter for robot AI?

Because training, augmenting, and evaluating data at scale is a core part of making robotics development more repeatable.

Does this mean robots are ready for everything?

No. Real-world deployment still faces safety, evaluation, and operational limits.

Related Reading

- Microsoft Declarative Agents: 5 Practical Pilot Rules

- Claude Partner Network: 5 Smart Buying Lessons for AI Teams
