A deep research prompt template is a more useful topic than another “best AI prompt” list because the real problem is not hype. It is trust. Most weak AI reports fail for familiar reasons: they blur facts and interpretation, hide uncertainty, skip source discipline, and stop before they tell you what to verify next.

This prompt is designed to fix that. It gives deep research a cleaner structure for separating verified facts from interpretation, labeling uncertainty, and ending with follow-up questions instead of fake confidence. If you want AI reports that are easier to trust, edit, and use, this is much more practical than a generic prompt dump.

You are doing deep research for a reader who values clarity, source quality, and practical decision support.

Research question:

[Insert the exact question here]

Goal:

Produce a detailed report that helps the reader understand what is confirmed, what is uncertain, and what matters most.

Instructions:

1. Use the best available sources you can access.

2. Prioritize primary or official sources when possible.

3. Separate facts from interpretation.

4. If a point is not fully confirmed, label it clearly as uncertainty, limitation, or open question.

5. Do not fill gaps with guesses.

6. If sources disagree, show the disagreement and explain why it matters.

7. Use concrete dates whenever timing matters.

8. Keep the writing clear, specific, and free of hype.

Output format:

- Short answer first

- Key confirmed facts

- What is still uncertain

- Why this matters

- Source-backed detail

- Risks, caveats, or blind spots

- 3 questions the reader should ask next

- Source list with citations or links

Tone:

Smart, practical, and calm. Avoid clickbait wording and overclaiming.

Table of Contents

- What This Deep Research Prompt Template Does

- How To Use It

- Why It Works Better Than a Vague Research Prompt

- Useful Variations

- Failure Cases

- FAQ

- Related Reading

- Source

What This Deep Research Prompt Template Does

The prompt improves trustworthiness in four ways.

1. It forces fact separation

Many AI-generated reports sound persuasive because they mix confirmed facts, interpretation, and speculation into one smooth narrative. This template breaks that habit by explicitly asking for confirmed facts and unresolved uncertainty in separate sections.

2. It pushes the model toward source discipline

OpenAI’s deep research materials position the feature as a source-backed research workflow. The prompt reinforces that by asking for primary or official sources when possible and by telling the model not to fill gaps with guesses.

3. It makes timing visible

Deep research outputs get weaker when they talk about “recent” or “latest” without anchoring claims to actual dates. This prompt explicitly asks for concrete dates when timing matters, which helps readers judge freshness and relevance faster.

4. It ends with better next steps

A strong research output should not pretend the first report settled everything. The closing “next questions” section turns the output into a decision tool rather than a false final answer.
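The four properties above can be spot-checked mechanically once a report comes back. The following is a minimal sketch, not part of the original template: the function names, the abbreviated section list, and the list of vague timing words are all assumptions chosen for illustration. It only looks for section titles and for sentences that use words like "recent" without a four-digit year, so it is a rough filter rather than a substitute for reading the report.

```python
import re

# Abbreviated section titles from the template's output format.
# Assumption: the report reuses these titles more or less verbatim.
REQUIRED_SECTIONS = [
    "Key confirmed facts",
    "What is still uncertain",
    "Why this matters",
    "Risks, caveats, or blind spots",
    "Source list",
]

# Hypothetical list of timing words that should be anchored to a date.
VAGUE_TIMING = re.compile(r"\b(recent|recently|latest|currently|soon)\b", re.IGNORECASE)
HAS_YEAR = re.compile(r"\b(19|20)\d{2}\b")


def missing_sections(report: str) -> list[str]:
    """Return the required section titles that do not appear in the report."""
    lowered = report.lower()
    return [title for title in REQUIRED_SECTIONS if title.lower() not in lowered]


def undated_timing_claims(report: str) -> list[str]:
    """Return sentences that use a vague timing word but contain no year."""
    sentences = re.split(r"(?<=[.!?])\s+", report)
    return [
        sentence.strip()
        for sentence in sentences
        if VAGUE_TIMING.search(sentence) and not HAS_YEAR.search(sentence)
    ]
```

A report that passes both checks can still be wrong. The checks only confirm that the structure the prompt asked for is present and that timing claims are at least anchored to a year.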

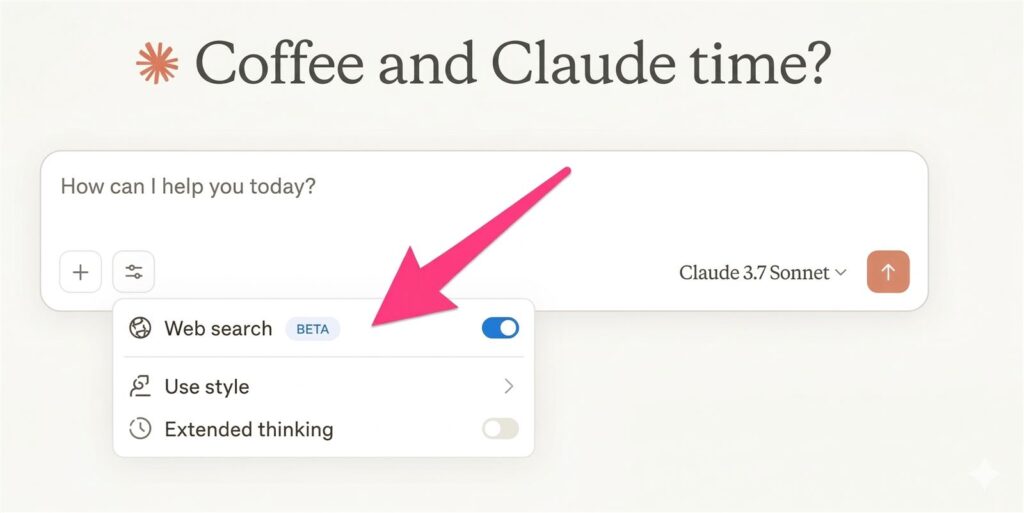

How To Use It

Start by replacing the research question with one narrow, concrete task. “How is the AI agent market changing?” is too broad. “What changed in Microsoft’s March 2026 Copilot Cowork and Researcher rollout, and what does it mean for knowledge workers?” is much better.

Then adjust the goal. If the output is for a purchase decision, add a comparison requirement. If it is for a blog draft, ask for an editorial structure. If it is for an internal memo, ask for a recommendation section.

The most important part is the instruction set. Keep the rules that protect credibility:

- separate facts from interpretation

- label uncertainty clearly

- use concrete dates

- surface disagreements across sources

- avoid invented certainty

Those five rules do more to improve report quality than adding more adjectives or more “be thorough” language.
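For readers who reuse the template often, the steps above can be sketched as a small helper that fills in the question, extends the goal, and appends extra output sections while always keeping the five credibility rules. This is an illustrative sketch under stated assumptions: `build_prompt`, its parameters, and the abbreviated section list are hypothetical names introduced here, not part of the original template or of any tool's API.

```python
# The five credibility rules the article recommends keeping.
CORE_RULES = [
    "Separate facts from interpretation.",
    "If a point is not fully confirmed, label it clearly as uncertainty, limitation, or open question.",
    "Use concrete dates whenever timing matters.",
    "If sources disagree, show the disagreement and explain why it matters.",
    "Do not fill gaps with guesses.",
]

DEFAULT_GOAL = (
    "Produce a detailed report that helps the reader understand "
    "what is confirmed, what is uncertain, and what matters most."
)

# Abbreviated version of the template's output format.
DEFAULT_SECTIONS = [
    "Short answer first",
    "Key confirmed facts",
    "What is still uncertain",
    "Why this matters",
    "Source-backed detail",
    "Risks, caveats, or blind spots",
    "3 questions the reader should ask next",
    "Source list with citations or links",
]


def build_prompt(question: str, goal_addition: str = "", extra_sections: tuple = ()) -> str:
    """Assemble a research prompt; the core rules are always included."""
    question = question.strip()
    # Refuse an empty question or the untouched "[Insert ...]" placeholder.
    if not question or question.startswith("["):
        raise ValueError("Replace the placeholder with a narrow, concrete question.")

    goal = DEFAULT_GOAL
    if goal_addition.strip():
        goal = f"{DEFAULT_GOAL} {goal_addition.strip()}"

    rules = "\n".join(f"{i}. {rule}" for i, rule in enumerate(CORE_RULES, start=1))
    sections = "\n".join(f"- {name}" for name in [*DEFAULT_SECTIONS, *extra_sections])

    return (
        "You are doing deep research for a reader who values clarity, "
        "source quality, and practical decision support.\n\n"
        f"Research question:\n{question}\n\n"
        f"Goal:\n{goal}\n\n"
        f"Instructions:\n{rules}\n\n"
        f"Output format:\n{sections}\n"
    )
```

For a purchase decision, a call such as `build_prompt(question, extra_sections=("Comparison table",))` adds the comparison requirement without touching the rules. Keeping the rules in a fixed list is a deliberate choice here: the parts that protect credibility cannot be dropped by accident when the goal or format changes.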

Why It Works Better Than a Vague Research Prompt

Weak deep research prompting usually sounds like this: “Research this topic and give me a detailed report.” That instruction encourages length, not quality. It does not tell the model how to handle conflicting evidence, uncertain claims, time-sensitive details, or the difference between a fact and an interpretation.

This template works better because it gives the model a reporting contract. The output is not only supposed to be long. It is supposed to be disciplined. That matters for readers because trustworthy writing often feels slightly less flashy than low-quality AI writing. It is more specific, more transparent, and more willing to admit where the evidence is incomplete.

That is also why this prompt fits the Prompts category well. The primary asset is copy-paste-ready text, but the surrounding explanation helps readers understand when the asset works and where it breaks.

Useful Variations

Variation 1: News explainer

Add this to the Goal section:

"Focus on what changed, why it matters, and what readers should watch next. Keep background concise."

Variation 2: Tool comparison

Add this to the Output format:

"Include a comparison table covering strengths, weaknesses, pricing or access limits, and best-fit users."

Variation 3: Executive memo

Add this to the Output format:

"End with a recommendation: act now, monitor, test, or defer, and explain why."

Failure Cases

The question is too broad

No prompt can rescue a topic that is still fuzzy. If the request is too wide, the report becomes generic even when the structure is good.

The source environment is weak

If the task depends on poor sources, the output can still look polished while staying fragile underneath. The prompt improves discipline, but it cannot create strong evidence where none exists.

The user mistakes interpretation for proof

This template explicitly separates confirmed facts from interpretation. Readers still need to respect that boundary. A well-labeled inference is not the same thing as a verified claim.

The task needs urgency, not depth

OpenAI’s help materials say that if time is critical, Search or standard chat may be faster. That is an important limit. Deep research is the wrong tool when the job is mostly a quick lookup.

FAQ

What is the main benefit of this deep research prompt template?

Its main benefit is trust. It pushes the model to separate confirmed facts from uncertainty, use source discipline, and end with next questions instead of fake certainty.

Can this prompt work for any topic?

It works across many topics, but it is strongest when the task needs source-backed synthesis. It is less useful for simple brainstorming or fast factual lookups.

Should readers still review the report manually?

Yes. The prompt improves structure and transparency, but it does not remove the need for human review on important claims, especially time-sensitive, regulated, or high-stakes topics.

Key Takeaways

- A stronger research prompt is about structure, not magic wording.

- Trust improves when facts, interpretation, and uncertainty are separated.

- Concrete dates and source discipline matter more than “be thorough” fluff.

- The best deep research outputs end with follow-up questions, not fake finality.

Related Reading

- Deep Research vs Search vs ChatGPT

- GPT-5.4 for ChatGPT

- Copilot Cowork Explained

- More AI prompt guides